QNAP PCIe FPGA Highest Performance Accelerator Card with Arria 10 GX1150, 8GB DDR4 2400MHz, PCIe Gen3 x8 interface – Mustang-F100-A10-R10

Brand: QNAP

Call for Price

For immediate delivery, contact the sales team. Orders usually ship in 2-3 days; backorders ship in 4-5 weeks. Images are for illustration purposes only.

Out of stock product

For urgent delivery, please contact sales before ordering. Orders usually ship by the estimated delivery date; in certain cases, backorders may take 4–12 weeks. Images are for illustration only and may differ from the actual item.

Mustang-F100 (FPGA)

Overview

Features

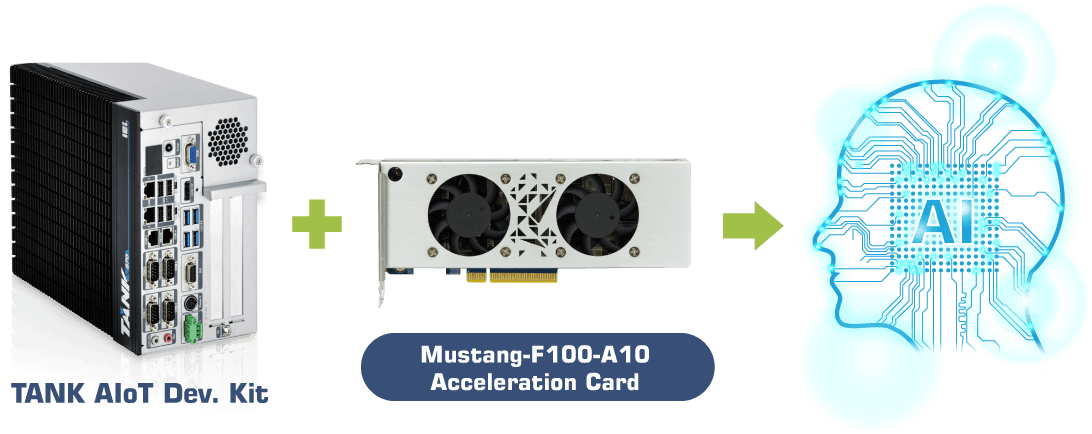

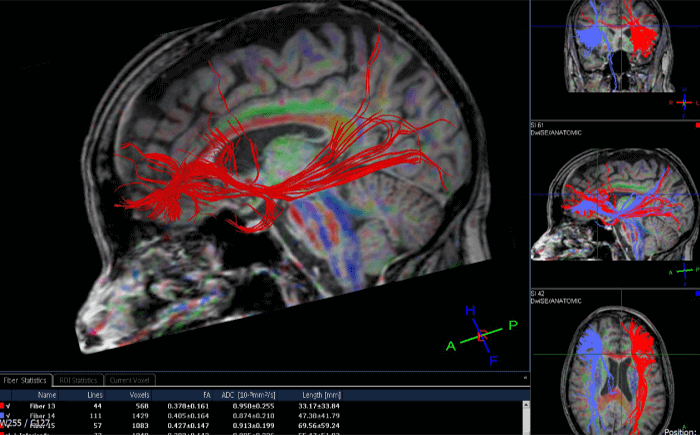

As QNAP NAS evolves to support a wider range of applications (including surveillance, virtualization, and AI), you need not only more storage space on your NAS but also more computing power to optimize targeted workloads. The Mustang-F100 is a PCIe-based accelerator card built on the programmable Intel® Arria® 10 FPGA, providing the performance and versatility of FPGA acceleration. It can be installed in a PC or a compatible QNAP NAS to boost performance, making it well suited to AI deep learning inference workloads.

- Half-height, half-length, double-slot form factor.

- Power-efficient and low-latency.

- Supports the OpenVINO™ toolkit; ready for AI edge computing.

- FPGAs can be optimized for different deep learning tasks.

- Intel® FPGAs support multiple floating-point precisions and inference workloads.

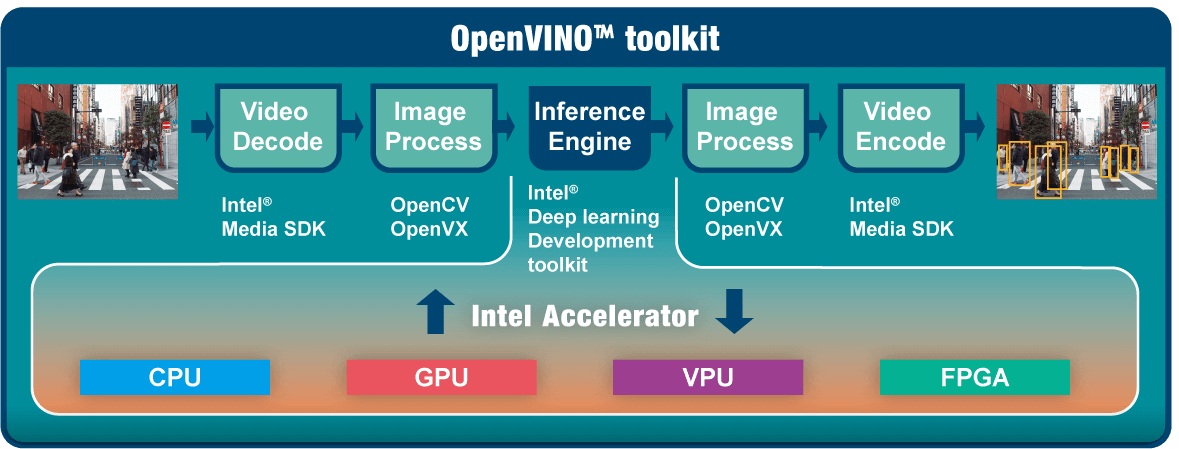

OpenVINO™ toolkit

The OpenVINO™ toolkit is based on convolutional neural networks (CNNs). It extends workloads across Intel® hardware and maximizes performance.

It can optimize pre-trained deep learning models from frameworks such as Caffe, MXNet, and TensorFlow into IR binary files, then run them with the inference engine heterogeneously across Intel® hardware such as CPU, GPU, Intel® Movidius™ Neural Compute Stick, and FPGA.
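The heterogeneous execution described above can be sketched in Python. This is a minimal illustration, not QNAP's or Intel's reference code: it assumes an OpenVINO release that ships both the `IECore` Python API and the FPGA plugin, and the helper names `hetero_device` and `load_on_fpga` are hypothetical. The `HETERO:` device string lists devices in priority order, so layers the FPGA cannot run fall back to the CPU.

```python
from typing import List


def hetero_device(priorities: List[str]) -> str:
    """Build an OpenVINO HETERO device string such as 'HETERO:FPGA,CPU'."""
    if not priorities:
        raise ValueError("at least one device is required")
    return "HETERO:" + ",".join(priorities)


def load_on_fpga(xml_path: str, bin_path: str):
    """Load an IR model (.xml + .bin) with FPGA first and CPU as fallback.

    Assumption: OpenVINO with the IECore API and the FPGA plugin is installed.
    The import is deferred so hetero_device() works without OpenVINO.
    """
    from openvino.inference_engine import IECore

    ie = IECore()
    net = ie.read_network(model=xml_path, weights=bin_path)
    return ie.load_network(network=net, device_name=hetero_device(["FPGA", "CPU"]))
```

Keeping the device string in one helper makes it easy to change the fallback order (for example `["FPGA", "GPU", "CPU"]`) without touching the loading code.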

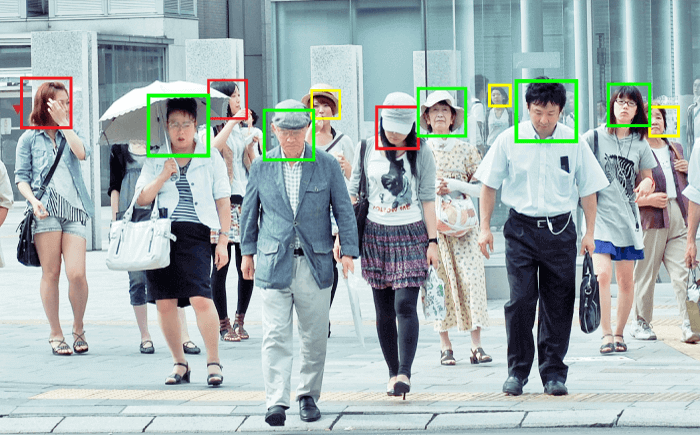

Get deep learning acceleration on Intel-based Server/PC

You can install the Mustang-F100 in a PC or workstation running Linux® (Ubuntu®) to accelerate applications such as deep learning inference, video streaming, and data center workloads. As an acceleration solution for real-time AI inference, the Mustang-F100 also works with the Intel® OpenVINO™ toolkit to optimize inference workloads for image classification and computer vision.

- Operating Systems: Ubuntu 16.04.3 LTS 64-bit, CentOS 7.4 64-bit, Windows 10 (more operating systems are coming soon)
- OpenVINO™ toolkit
  - Intel® Deep Learning Deployment Toolkit
    - Model Optimizer
    - Inference Engine
  - Optimized computer vision libraries
  - Intel® Media SDK
  - *OpenCL™ graphics drivers and runtimes
  - Current supported topologies: AlexNet, GoogleNet, Tiny Yolo, LeNet, SqueezeNet, VGG16, ResNet (more variants are coming soon)
  - Intel® FPGA Deep Learning Acceleration Suite
- High flexibility: the Mustang-F100-A10 is developed on the OpenVINO™ toolkit structure, which allows models trained with frameworks such as Caffe, TensorFlow, and MXNet to run on it after conversion to optimized IR.
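The conversion of a trained model to optimized IR is done with the Model Optimizer. The sketch below only assembles a command line for it; the script location `mo.py`, the example file names, and the default `FP16` precision are assumptions, and the helper `model_optimizer_args` is hypothetical. Check which precision your FPGA bitstream expects before converting.

```python
from typing import List

# Framework identifiers accepted by the Model Optimizer's --framework option
# for the three frameworks named in the text.
SUPPORTED_FRAMEWORKS = {"caffe", "tf", "mxnet"}


def model_optimizer_args(input_model: str, framework: str,
                         output_dir: str, data_type: str = "FP16") -> List[str]:
    """Return the argument list for a Model Optimizer run (not executed here)."""
    if framework not in SUPPORTED_FRAMEWORKS:
        raise ValueError(f"unsupported framework: {framework}")
    return [
        "python3", "mo.py",            # assumed script path; adjust to your install
        "--framework", framework,
        "--input_model", input_model,
        "--data_type", data_type,      # assumption: FP16 suits the target bitstream
        "--output_dir", output_dir,
    ]


if __name__ == "__main__":
    # Print the command instead of running it, so the sketch has no side effects.
    print(" ".join(model_optimizer_args("squeezenet.caffemodel", "caffe", "ir")))
```

The returned list can be passed to `subprocess.run` once the path to `mo.py` has been set for the local installation.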

*OpenCL™ is a trademark of Apple Inc., used by permission by Khronos.

QNAP NAS as an Inference Server

OpenVINO™ toolkit extends workloads across Intel® hardware (including accelerators) and maximizes performance. When used with QNAP’s OpenVINO™ Workflow Consolidation Tool, the Intel®-based QNAP NAS presents an ideal Inference Server that assists organizations in quickly building an inference system. Providing a model optimizer and inference engine, the OpenVINO™ toolkit is easy to use and flexible for high-performance, low-latency computer vision that improves deep learning inference. AI developers can deploy trained models on a QNAP NAS for inference, and install the Mustang-F100 to achieve optimal performance for running inference.

Learn More: OpenVINO™ Workflow Consolidation Tool

Note:

1. QTS 4.4.0 (or later) and OWCT v1.1.0 are required for the QNAP NAS.

2. Using FPGA card computing on the QNAP NAS disables the VM pass-through function. To avoid potential data loss, make sure that all ongoing NAS tasks are finished before restarting.
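The version requirements in note 1 can be checked with a simple comparison. This is a minimal sketch: it assumes plain dotted version strings, treats OWCT v1.1.0 as a minimum version (the note does not say whether later versions also qualify), and the helper names are hypothetical.

```python
from typing import Tuple


def parse_version(text: str) -> Tuple[int, ...]:
    """Turn '4.4.0' or 'v1.1.0' into a tuple of integers for comparison."""
    return tuple(int(part) for part in text.lstrip("v").split("."))


def meets_requirement(installed: str, minimum: str) -> bool:
    """True if the installed version is at least the minimum version."""
    return parse_version(installed) >= parse_version(minimum)


if __name__ == "__main__":
    print(meets_requirement("4.4.1", "4.4.0"))   # QTS check
    print(meets_requirement("v1.1.0", "1.1.0"))  # OWCT check
```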

Easy-to-manage Inference Engine with QNAP OWCT

Note:

1. Install the Mustang-F100 into the PCIe Gen3 x8 slot of the NAS. Check the Compatibility List for supported models.

2. The “Mustang Card User Driver” must be installed from the QTS App Center.

Applications

Dimensions

Note:

Up to 8 cards are supported with operating systems other than QTS; the QNAP TS-2888X NAS supports up to 4 cards. Assign a card ID number (from 0 to 7) to each Mustang-F100 manually using the rotary switch. The assigned card ID number is shown on the card's LED display after power-up.
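The card ID rules in this note can be expressed as a small planning check. This is an illustrative sketch only: the function `check_card_ids` is hypothetical, and the requirement that each card have a unique ID is an assumption inferred from the note rather than stated in it.

```python
from typing import List

MAX_CARD_ID = 7  # the rotary switch offers IDs 0 to 7


def check_card_ids(card_ids: List[int], max_cards: int = 8) -> List[str]:
    """Return a list of problems with a planned card ID assignment.

    max_cards is 8 for non-QTS systems and 4 for the QNAP TS-2888X.
    """
    problems = []
    if len(card_ids) > max_cards:
        problems.append(f"{len(card_ids)} cards planned, limit is {max_cards}")
    for cid in card_ids:
        if not 0 <= cid <= MAX_CARD_ID:
            problems.append(f"card ID {cid} is outside 0-{MAX_CARD_ID}")
    # Assumption: IDs must be unique so each card can be told apart.
    for cid in sorted({c for c in card_ids if card_ids.count(c) > 1}):
        problems.append(f"card ID {cid} is assigned more than once")
    return problems
```

For example, `check_card_ids([0, 1, 2, 3], max_cards=4)` returns an empty list for a fully populated TS-2888X, while a fifth card would be reported as exceeding the limit.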

Short Tech Specification

| PCIe FPGA Highest Performance Accelerator Card with Arria 10 GX1150, 8GB DDR4 2400MHz, PCIe Gen3 x8 interface |

Detailed Tech Specification

Hardware Specs

| Item | Specification |
| --- | --- |
| Computing Accelerator Cards | FPGA |
| Main FPGA | Intel® Arria® 10 GX1150 FPGA |
| Operating System | PC: Ubuntu 16.04.3 LTS 64-bit, CentOS 7.4 64-bit, Windows 10 (more operating systems are coming soon). NAS: QTS (the “Mustang Card User Driver” must be installed from the QTS App Center). |
| Voltage Regulator and Power Supply | Intel® Enpirion® Power Solutions |
| Memory | 8GB on-board DDR4 |
| Interface | PCI Express x8, compliant with PCI Express Specification V3.0 |
| Power Consumption (W) | <60W |
| Operating Temperature & Relative Humidity | 5°C to 60°C (ambient temperature), 5% to 90% |
| Cooling | Active fan: (50 x 50 x 10 mm) x 2 |
| Dimensions | 169.5 x 68.7 x 33.7 mm |
| Power Connector | *Reserved PCIe 6-pin 12V external power |
| Dip Switch/LED indicator | Up to 8 cards are supported with operating systems other than QTS; the QNAP TS-2888X NAS supports up to 4 cards. Assign a card ID number (from 0 to 7) to each Mustang-F100 manually using the rotary switch. The assigned card ID number is shown on the card's LED display after power-up. |
| Note | *A standard PCIe slot provides 75W of power; the external power connector is reserved for users whose system configuration differs. |
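The power figures in the table allow a quick worst-case budget. The sketch below uses only the two numbers given above (under 60W per card, 75W per standard PCIe slot) and treats 60W as an upper bound; the function names are hypothetical, and real consumption depends on the workload and bitstream.

```python
CARD_MAX_WATTS = 60   # upper bound from the "Power Consumption" row (<60W)
SLOT_WATTS = 75       # standard PCIe slot power from the table note


def worst_case_power(num_cards: int) -> int:
    """Upper bound on total card power draw, in watts."""
    if num_cards < 0:
        raise ValueError("num_cards must be non-negative")
    return num_cards * CARD_MAX_WATTS


def slot_headroom() -> int:
    """Minimum slot power left over when one card draws its upper bound."""
    return SLOT_WATTS - CARD_MAX_WATTS


if __name__ == "__main__":
    for cards in (1, 4, 8):
        print(cards, "card(s):", worst_case_power(cards), "W upper bound")
```

With eight cards the upper bound is 480W, which is worth comparing against the host power supply rating before populating every slot.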

Reviews

There are no reviews yet.